Selecting The Right Testing Scenario

Selecting the right test scenario is as crucial as selecting the scenarios for training your person detection solution. Your test scenario should reflect your actual target requirement, i.e., how you will use your people detection solution. In other words, your training and testing scenarios should be similar to achieve good performance.

Here, the test scenario selection involves:

1. Camera Parameters

2. Target Environment

3. Target Scale

Camera Parameters

Camera parameters describe the mathematical relationship between the 3D coordinates of a point in the scene, where the light originates, and the 2D coordinates of its projection on the image plane.

Camera parameters are of two types:

- Intrinsic or internal

- Extrinsic or external

Intrinsic Camera Parameters:

Intrinsic parameters are internal to the camera itself, such as focal length and lens distortion. The sensor size and the focal length determine the number of pixels an object (in our case, a person) occupies in the image, so these parameters are linked to the target scale.
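The link between focal length, sensor size, and pixel footprint can be sketched with the ideal pinhole camera model. This is only an approximation: it ignores lens distortion and assumes the person stands upright and roughly perpendicular to the optical axis. The function name and the example camera values are illustrative assumptions, not taken from any specific camera.

```python
def object_height_px(real_height_m, distance_m, focal_length_mm,
                     sensor_height_mm, image_height_px):
    """Approximate on-image height (in pixels) of an object under the pinhole model."""
    # Focal length in pixels: scale millimetres by pixels per millimetre of sensor.
    focal_length_px = focal_length_mm * image_height_px / sensor_height_mm
    # Similar triangles: image height = focal length * real height / distance.
    return focal_length_px * real_height_m / distance_m


# Hypothetical setup: 1.7 m tall person, 4 mm lens, 3.6 mm sensor height, 1080 px image.
for distance in (5.0, 10.0, 20.0):
    print(distance, round(object_height_px(1.7, distance, 4.0, 3.6, 1080)))
```

With these assumed values, the person occupies roughly 408, 204, and 102 pixels at 5 m, 10 m, and 20 m, which shows how quickly the scale shrinks with distance.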

Extrinsic Camera Parameters:

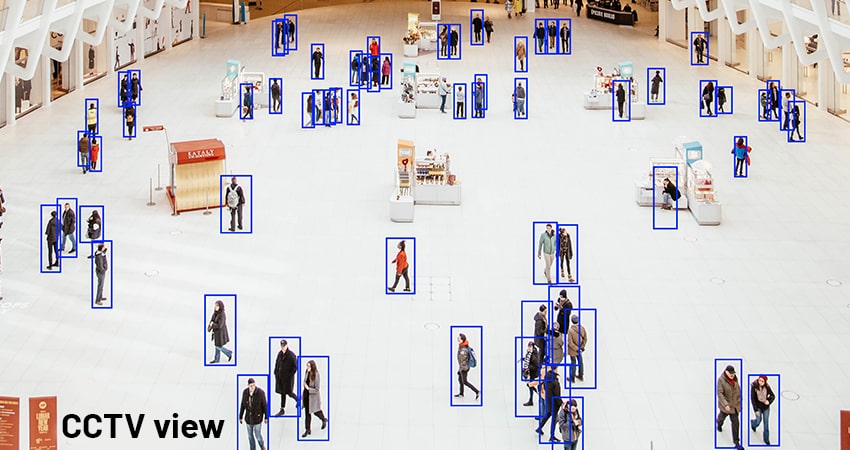

Extrinsic parameters, also known as external parameters or camera pose, are external to the camera, such as the camera’s orientation with respect to a reference coordinate system. Position the camera view according to the target requirement: a person’s features in a frontal view differ from those in a CCTV view. Hence, select the training dataset carefully to match the required view, as this choice significantly affects accuracy.

Target Environment

Suppose your target environment is a mine and you need to detect people there. Your test data should then be specific to miners, i.e., miners may wear safety gear that a standard pedestrian detection algorithm finds difficult to detect. The same applies to lighting conditions, attire in different geographic locations, etc. So, keep your target environment in mind when testing.

Target Scale

Target scale refers to the scale at which you want to detect objects, and it is closely related to the camera parameters. If you have trained your model on objects of a certain scale, you should test on the same scale. For example, if your model is trained only on larger objects (i.e., objects closer to the camera), it will not perform well on smaller objects (i.e., objects farther away) because it did not see distant views of the objects during training. The target scale is therefore an important factor to consider for your specific use case. Looked at another way: if your target camera has a wide FoV, objects will appear smaller, and you might not get good test results if you trained on narrow-FoV images. This is how the target scale relates to the camera parameters.

Another point worth mentioning is that you may want your model to be scale invariant, i.e., to detect people at all object scales. This is generally not advisable, as targeting a wider range of scales may reduce accuracy, but it is possible depending on how critical it is for your use case.

Selecting The Right Testing Methodology

Now we know that we have to select a test scenario as per the specific use case. The next question is how we can perform testing after choosing the scenario.

There are two common ways to perform testing:

1. Dataset-Based Testing

2. Real-Time Deployment Testing

Dataset-Based Testing

Dataset-based testing is the conventional approach to testing any object detection model. This method involves an enormous effort to generate Ground Truth (GT) for the test dataset. Once the GT is available, evaluating the predictions is just a click away. Metrics such as Average Precision (AP) or mean Average Precision (mAP), as defined in the previous blog, can be used to quantify accuracy.

The dataset on which you test the model should not be part of the training or validation datasets. The model has already seen those datasets during training, so testing on them will give a higher mAP without giving us confidence that the model will work well on unseen data. So, we have to collect a separate test dataset that comes from the same domain as the training and validation datasets but does not overlap with them.
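One simple way to guard against accidental overlap is to compare file contents by hash. The following is a minimal sketch, with hypothetical file names and stand-in file contents; it only catches byte-identical copies, so near-duplicates such as consecutive video frames would still need a separate check.

```python
import hashlib
import tempfile
from pathlib import Path


def file_digest(path):
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(Path(path).read_bytes()).hexdigest()


def find_leaked_files(test_paths, seen_paths):
    """Return test files whose content also appears among training/validation files."""
    seen_digests = {file_digest(p) for p in seen_paths}
    return [p for p in test_paths if file_digest(p) in seen_digests]


# Tiny demonstration with stand-in files (real use would pass image paths).
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "train_a.jpg").write_bytes(b"frame-1")
    (root / "val_a.jpg").write_bytes(b"frame-2")
    (root / "test_a.jpg").write_bytes(b"frame-3")
    (root / "test_b.jpg").write_bytes(b"frame-1")  # same bytes as train_a.jpg
    leaked = find_leaked_files(
        [root / "test_a.jpg", root / "test_b.jpg"],
        [root / "train_a.jpg", root / "val_a.jpg"],
    )
    leaked_names = [p.name for p in leaked]

print(leaked_names)
```

Here only `test_b.jpg` is reported, because it is a byte-for-byte copy of a training file.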

Also, a metric such as AP or mAP alone is insufficient to understand the model. For example, while improving the mAP, you might unknowingly increase the false positives, which is bad for a model deployed in a real environment. These metrics are helpful for quality assurance during model development, but in addition we need explicit tests for the behaviors we expect our model to follow. Together, metrics and explicit tests help in building high-quality models.
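To keep false positives visible alongside an aggregate metric, it can help to report raw true-positive, false-positive, and false-negative counts at the confidence threshold you intend to deploy. Below is a minimal sketch, assuming boxes are `(x1, y1, x2, y2)` tuples, predictions are already filtered and sorted by confidence, and a greedy match at IoU 0.5 is acceptable. The function names are illustrative and this is not a full AP/mAP implementation.

```python
def iou(box_a, box_b):
    """Intersection over union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def count_errors(predictions, ground_truth, iou_threshold=0.5):
    """Greedily match predictions to ground-truth boxes; return (TP, FP, FN)."""
    unmatched = list(ground_truth)
    tp = fp = 0
    for pred in predictions:
        # Pick the still-unmatched ground-truth box with the highest overlap.
        best = max(unmatched, key=lambda gt: iou(pred, gt), default=None)
        if best is not None and iou(pred, best) >= iou_threshold:
            unmatched.remove(best)
            tp += 1
        else:
            fp += 1
    return tp, fp, len(unmatched)


ground_truth = [(10, 10, 50, 100), (60, 10, 100, 100)]
predictions = [(12, 12, 50, 100), (200, 200, 240, 290)]
tp, fp, fn = count_errors(predictions, ground_truth)
print(tp, fp, fn)
```

In this toy example one person is found, one prediction is a false positive, and one person is missed, which is information a single mAP number would not expose directly.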

So, which explicit tests should we run to understand the model better? There are different types of model tests that we can use to examine the behavioral attributes of a model:

1. Invariance Tests

2. Minimum Functional Tests

Invariance Tests: To check the consistency of the model’s predictions, we can introduce changes to the input and check how the model reacts. This is closely related to data augmentation, where we apply changes to the input during training while preserving the ground truth annotations.

With an invariance test, we can check how the model behaves when there are slight input changes. For example, if we have two images containing a person, one bright and the other dark, the model should detect the person in both images.
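A brightness invariance check could be sketched as follows. The `detect` argument is a hypothetical callable standing in for your model, images are represented as plain lists of grayscale rows to keep the example dependency-free, and only the number of detections is compared. A stricter version would also compare box positions.

```python
def adjust_brightness(image, delta):
    """Return a copy of a grayscale image (rows of 0-255 ints) shifted by delta and clipped."""
    return [[min(255, max(0, px + delta)) for px in row] for row in image]


def invariance_test(detect, image, deltas=(-40, 40)):
    """Map each brightness shift to True if the detection count is unchanged."""
    baseline = len(detect(image))
    return {d: len(detect(adjust_brightness(image, d))) == baseline for d in deltas}


def threshold_detector(image):
    # Stand-in "detector" (an assumption for the demo, not a real model):
    # reports one box if any pixel is brighter than 128.
    return [(0, 0, 1, 1)] if any(px > 128 for row in image for px in row) else []


image = [[20, 20, 20, 20],
         [20, 150, 150, 20],
         [20, 150, 150, 20],
         [20, 20, 20, 20]]
results = invariance_test(threshold_detector, image)
print(results)
```

The stand-in detector deliberately fails on the darkened image, which is the kind of inconsistency an invariance test is meant to surface.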

Minimum Functional Tests: Minimum functional tests allow us to define a set of test cases with their expected behavior, which helps us quantify the model performance in different scenarios.

For instance, you may want to understand the model behavior when persons are occluded or the scene is crowded.
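Such test cases can be written down as data, each with an expected range for the number of detected persons. The sketch below assumes a hypothetical `detect_count` callable that maps an image identifier to the model’s person count; the case names, image identifiers, and counts are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class FunctionalCase:
    name: str
    image_id: str
    min_persons: int  # fewest detections that still count as a pass
    max_persons: int  # most detections that still count as a pass


def failing_cases(detect_count, cases):
    """Return the names of cases whose detected count falls outside the expected range."""
    return [c.name for c in cases
            if not c.min_persons <= detect_count(c.image_id) <= c.max_persons]


# Stand-in model output (hypothetical): image_id -> number of persons detected.
stub_counts = {"occluded_01": 2, "crowd_01": 5, "empty_01": 0}
cases = [
    FunctionalCase("partially occluded pair", "occluded_01", 2, 2),
    FunctionalCase("crowded scene", "crowd_01", 8, 10),
    FunctionalCase("empty scene", "empty_01", 0, 0),
]
failed = failing_cases(stub_counts.get, cases)
print(failed)
```

Grouping cases by scenario in this way makes it easy to see that, in this made-up run, only the crowded scene falls short.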

Using the above tests and performance metrics, we can build high-quality models and gain more confidence about how they will behave in a real-time environment.

Real-Time Deployment Testing

Real-time deployment testing is another way to test the model. Dataset-based testing restricts you to a specific set of images, whereas real-time deployment testing allows you to test over time with different inputs. Though it is not as straightforward as the dataset-based approach, you can use it to gain more confidence in your model. This approach does not produce a metric value as output; you can only visualize the predictions. So how can we test in real time?

Since the amount of data processed in real time is huge, it is not possible to check all of it manually, so we propose the following approaches:

1. Motion-Based Sample Testing

2. Person Count Based Testing

Motion-Based Sample Testing: Instead of processing every frame from the stream, we can use motion sensing, meaning we run our model and check the correctness of its predictions only when motion is detected. This way, we can reduce the human effort needed to monitor the system continuously.

There are algorithms available to detect motion. Background subtraction is one such algorithm for identifying moving objects. As the name suggests, it separates foreground (moving) objects from the background (the parts of the image that do not move) in a sequence of frames. It calculates the foreground mask by subtracting the background from the current frame. We can use such algorithms to detect motion and then visualize only those frames in which a person is in motion to inspect our predictions.
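The idea can be illustrated with a deliberately simplified running-average background model on small grayscale frames. This is a sketch of the general technique under assumed thresholds, not a production method; in practice you would more likely use a library implementation such as OpenCV’s `cv2.createBackgroundSubtractorMOG2`. The function names and parameter values below are illustrative.

```python
def update_background(background, frame, alpha=0.1):
    """Blend the new frame into the running-average background model."""
    return [[(1 - alpha) * b + alpha * f for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(background, frame)]


def foreground_mask(background, frame, threshold=30):
    """Mark pixels whose absolute difference from the background exceeds the threshold."""
    return [[abs(f - b) > threshold for b, f in zip(b_row, f_row)]
            for b_row, f_row in zip(background, frame)]


def frames_with_motion(frames, min_pixels=2, alpha=0.1, threshold=30):
    """Yield indices of frames in which enough pixels differ from the background."""
    background = [[float(px) for px in row] for row in frames[0]]
    for index, frame in enumerate(frames[1:], start=1):
        mask = foreground_mask(background, frame, threshold)
        if sum(sum(row) for row in mask) >= min_pixels:
            yield index
        background = update_background(background, frame, alpha)


static = [[10] * 4 for _ in range(4)]
moving = [row[:] for row in static]
for r in (1, 2):
    for c in (1, 2):
        moving[r][c] = 200  # a bright 2x2 blob enters the scene

frames = [static, static, moving, static]
motion_frames = list(frames_with_motion(frames))
print(motion_frames)
```

Only the frame containing the blob is flagged, so only that frame would need to be passed to the detector and reviewed.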

Person Count Based Testing: Another approach is to compare the number of persons detected by our model against some ground truth. This approach requires integrating a tracking solution, so the results also depend on the tracker’s accuracy.

For ground truth, we prefer infrared (IR) sensors or similar people-counting sensors, which report the number of people who have crossed: each time a person passes the sensor, its count is incremented by 1. The assumption here is that the IR sensor is perfect, i.e., it does not miss any person. We then compare this count against our model’s predicted person count.

To count the number of persons in the application, we draw an imaginary line in the frame (in the same region covered by the IR sensor). Each time a person crosses the line, the application count is incremented by 1.
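Line-crossing counting could look roughly like the sketch below. It assumes the tracker already provides, for each track ID, the sequence of bounding-box centroids over time, and that the counting line is horizontal. As a simplification, each track is counted at most once, so a person who walks back and forth would need extra handling. The data layout and names are assumptions for illustration.

```python
def count_line_crossings(tracks, line_y):
    """Count tracks whose centroid moves from one side of a horizontal line to the other.

    `tracks` maps a tracker-assigned ID to a list of (x, y) centroids over time.
    """
    count = 0
    for centroids in tracks.values():
        ys = [y for _, y in centroids]
        # A crossing happens when consecutive positions lie on different sides.
        if any((a >= line_y) != (b >= line_y) for a, b in zip(ys, ys[1:])):
            count += 1
    return count


tracks = {
    1: [(50, 80), (52, 95), (55, 110)],     # walks downward across the line
    2: [(120, 60), (121, 70)],              # stays above the line
    3: [(200, 130), (198, 105), (197, 90)]  # walks upward across the line
}
crossings = count_line_crossings(tracks, line_y=100)
print(crossings)
```

In this made-up example two of the three tracks cross the line at `y = 100`, so the application count would be 2.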

From the output, we can infer:

- If the model’s count matches the ground truth count from the IR sensor, the model most likely did not miss any person (although a missed detection and a false positive can cancel each other out).

- If the model’s count is lower than the ground truth, the model missed some persons.

- If the model’s count is higher than the ground truth, the model produced false positives.
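These three cases can be captured in a small helper for logging or reporting. This is only a sketch with a hypothetical function name; it looks at the net difference, so it cannot tell when misses and false positives offset each other.

```python
def interpret_counts(model_count, sensor_count):
    """Summarise how the model's person count compares with the reference sensor count."""
    difference = model_count - sensor_count
    if difference == 0:
        return "match: no net misses or false positives"
    if difference < 0:
        return f"model undercounted by {-difference}: likely missed detections"
    return f"model overcounted by {difference}: likely false positives"


for model_count, sensor_count in [(10, 10), (8, 10), (13, 10)]:
    print(model_count, sensor_count, interpret_counts(model_count, sensor_count))
```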

This approach has limitations: the reference sensor may also miss some counts, so each event needs to be manually verified on both sides to confirm it.

In any case, testing people detection solutions requires substantial manual effort and an understanding of metrics.